When was the last time a PR actually merged the same day it was opened? If you're like most teams, it's been a while. Code review has turned into this multi-day game of tag where nobody's quite sure whose turn it is. Your engineers are stuck waiting on feedback, reviewers are drowning in diffs, and nothing's shipping as fast as it should. The path to reducing PR time isn't about working harder or hiring more people. It's about fixing the seven places where work quietly stalls out.

TLDR:

- Keep PRs under 225 lines of code to cut review time and improve approval confidence.

- Set an 8-hour review SLA to prevent PRs from sitting idle for days.

- Cap engineers at 2 active PRs each to reduce context switching and bottlenecks.

- AI code review tools catch issues automatically, and AI-assisted developers complete tasks up to 50% faster.

- Alloy lets you experiment and validate changes in isolated sandboxes before merging to production.

Keep Pull Requests Small and Focused

The size of your pull requests matters more than most teams realize. Research shows that elite engineering teams keep PRs under 225 lines of code, while strong teams stay below 400. The gap in review speed between those thresholds is real.

Consider the reviewer's perspective. A 1,000-line PR requires holding an enormous amount of context in mind at once. A 200-line PR is something a reviewer can reason about clearly, approve quickly, and feel confident in.

Smaller PRs also get picked up faster. Reviewers are far less likely to procrastinate on a focused change that touches one feature than a sprawling diff that rewrites half the codebase. Breaking work into smaller, scoped chunks is one of the most direct ways to cut your PR cycle time.

What "Small and Focused" Actually Looks Like in Practice

- One logical change per PR, not one ticket per PR. A ticket might contain several independent changes that can ship separately.

- Avoid mixing refactors with feature work. Reviewers need a clear mental model of what they are approving.

- If a PR description requires more than three sentences to explain scope, it is probably doing too much.
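One way to make the size guideline checkable is to count changed lines before requesting review. The sketch below parses the output of `git diff --numstat` (tab-separated added, deleted, and path columns, with `-` for binary files) and compares the total to the 225-line threshold. The function names are illustrative, and counting added plus deleted lines is only one possible definition of "PR size".

```python
# Threshold taken from the guideline above; names here are illustrative.
MAX_CHANGED_LINES = 225


def count_changed_lines(numstat_output: str) -> int:
    """Sum added and deleted lines from `git diff --numstat` output."""
    total = 0
    for line in numstat_output.splitlines():
        parts = line.split("\t")
        if len(parts) < 3:
            continue  # skip blank or malformed lines
        added, deleted = parts[0], parts[1]
        # Binary files are reported as "-" in both columns; ignore them.
        if added.isdigit() and deleted.isdigit():
            total += int(added) + int(deleted)
    return total


def is_small_pr(numstat_output: str, limit: int = MAX_CHANGED_LINES) -> bool:
    """True when the diff stays under the line limit."""
    return count_changed_lines(numstat_output) < limit
```

A team could feed this the output of something like `git diff --numstat main...HEAD` in a pre-review check, assuming `main` is the base branch.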

Set Explicit Review Time Expectations

Without a stated expectation, PRs just sit. Reviewers assume someone else will get to it, push it to tomorrow, and suddenly a simple change has been idle for two days.

The fix is a team agreement with real accountability. Set a target: 95% of PRs reviewed within 8 hours. That sounds strict until you compare it to where most teams actually land. Median time to first review sits at 15 hours across organizations, nearly two full business days before anyone even looks at a change.

Making this expectation explicit changes behavior. Post it in your team handbook, add it to onboarding, and track it in your engineering metrics. When reviewers know the number, they tend to hit it.
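Tracking the target can be as simple as the sketch below, which computes the share of PRs that received a first review within the SLA. It assumes you can export an opened timestamp and a first-review timestamp per PR (with `None` for PRs nobody has reviewed yet), and it measures wall-clock hours, not business hours. All names are hypothetical.

```python
from datetime import datetime, timedelta

REVIEW_SLA = timedelta(hours=8)  # target from the team agreement
TARGET_RATE = 0.95               # share of PRs that should meet the SLA


def first_review_compliance(prs, sla=REVIEW_SLA):
    """Fraction of PRs whose first review arrived within the SLA.

    `prs` is a list of (opened_at, first_review_at) tuples;
    first_review_at is None when the PR has not been reviewed.
    """
    if not prs:
        return 1.0  # no PRs means nothing missed the SLA
    on_time = sum(
        1
        for opened_at, reviewed_at in prs
        if reviewed_at is not None and reviewed_at - opened_at <= sla
    )
    return on_time / len(prs)


def meets_target(prs, target=TARGET_RATE):
    """True when the compliance rate reaches the team target."""
    return first_review_compliance(prs) >= target
```

Unreviewed PRs count as misses here, which is a deliberate choice: a PR that has been waiting for days should pull the number down.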

| Metric | Elite Teams | Strong Teams | Industry Median | Target to Set |
|---|---|---|---|---|
| PR Size (Lines of Code) | Under 225 lines | Under 400 lines | 500+ lines | Keep under 225 lines for fastest reviews |
| Time to First Review | Under 4 hours | Under 8 hours | 15 hours (nearly 2 business days) | 95% of PRs reviewed within 8 hours |
| Active PRs per Engineer | 1-2 PRs maximum | 2-3 PRs maximum | 3+ PRs common | Cap at 2 active PRs to reduce context switching |
| Review Completion Rate | 95%+ within SLA | 80-90% within SLA | No defined SLA | Track and aim for 95% within 8-hour window |
| Task Completion Speed with AI Tools | Up to 50% faster | 30-40% faster | No AI assistance baseline | Implement AI code review for automatic issue detection |

Limit Work in Progress Across Your Team

Too much concurrent work gums up the entire review pipeline. When every engineer has three open PRs waiting for feedback, reviewers face constant context switching and nothing actually ships.

WIP limits fix this by capping how many items can be active at any stage. A common starting point is two in-progress PRs per engineer. The rule is simple: if you are already at the limit, get one of your open PRs merged or closed before opening another.

This creates a pull-based system where finishing takes priority over starting. Bottlenecks become visible quickly, and the team's focus moves from opening new work to completing what's already in motion.

A few ways to apply WIP limits in practice:

- Cap open PRs per engineer at two, so reviewers never face an overwhelming queue of context to absorb at once.

- Use your project board to surface idle reviews that have gone quiet, making it easy to identify where work is stalling.

- Block new branch creation until active PRs are merged or closed, reinforcing the priority of completion over starting fresh work.
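The cap is easy to check mechanically. This minimal sketch assumes you already have a list with one author name per open PR (how you fetch that list depends on your tooling), and the function names are hypothetical.

```python
from collections import Counter

WIP_LIMIT = 2  # open PRs allowed per engineer


def engineers_over_limit(open_pr_authors, limit=WIP_LIMIT):
    """Map each author above the limit to their open PR count."""
    counts = Counter(open_pr_authors)
    return {author: n for author, n in counts.items() if n > limit}


def may_open_new_pr(author, open_pr_authors, limit=WIP_LIMIT):
    """True when the author is still below the WIP limit."""
    return Counter(open_pr_authors)[author] < limit
```

With open PRs authored by `["ana", "ben", "ana", "ana"]`, `engineers_over_limit` flags `ana` with three open PRs, while `ben` may still open another.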

Use Automation and AI Code Review Tools

AI-powered code review tools have become one of the most reliable ways to reduce PR time without adding headcount. These tools analyze pull requests automatically, catching bugs, style violations, and potential regressions before a human reviewer even opens the diff.

The time savings are real. According to McKinsey, AI-assisted developers complete tasks up to 50% faster than those working without AI support. That figure covers development tasks in general; in code review, the gain shows up as fewer back-and-forth cycles and faster approvals.

What to Look for in an AI Code Review Tool

Not every tool delivers the same value. When comparing options, consider:

- Context-aware suggestions that understand your codebase instead of applying generic rules across every project

- Integration with your existing GitHub workflow so reviews happen inside the tools your team already uses

- Customizable rule sets that reflect your team's standards, not a one-size-fits-all checklist

- Actionable feedback that reviewers can act on immediately, reducing the clarification loops that quietly slow approvals down

The right tool catches the low-hanging issues automatically, freeing senior engineers to focus on architecture and logic.

Improve Communication and PR Context

Reviewers move faster when they understand why a change exists, not just what changed. A PR with a vague title and no description forces reviewers to reverse-engineer intent from the diff, which wastes time and invites unnecessary questions.

A good PR description answers three things upfront: what problem this solves, what approach was taken, and how to verify it works. Adding a short testing checklist or a screen recording cuts the clarification cycle dramatically. Reviewers can approve with confidence without asking a follow-up that stalls the PR for another half day.

Some teams use a PR template to make this the default. When every PR follows the same structure, reviewing becomes faster because the information is always in the same place.

What a Strong PR Template Includes

A well-structured template removes guesswork for both the author and the reviewer. Most high-functioning teams include a few consistent fields:

- A short summary of the problem being solved, so reviewers immediately understand the motivation without reading the full diff.

- The approach taken and any alternatives that were considered and ruled out.

- A testing checklist outlining how to verify the change works as expected.

- Any visual evidence such as screenshots or recordings where UI changes are involved.
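If you want the template to be more than a suggestion, a small check can flag descriptions that skip a section. The sketch below assumes PR descriptions use markdown-style `#` headings and that the required sections are named Summary, Approach, and Testing. Those names are placeholders, so swap in whatever your template uses.

```python
# Hypothetical section names; adjust to match your own template.
REQUIRED_SECTIONS = ("Summary", "Approach", "Testing")


def missing_sections(description, required=REQUIRED_SECTIONS):
    """Return the required section names absent from a PR description."""
    headings = {
        line.lstrip("#").strip().lower()
        for line in description.splitlines()
        if line.startswith("#")
    }
    return [name for name in required if name.lower() not in headings]
```

Visual evidence is left out of the required list on purpose, since it only applies to UI changes.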

Identify and Resolve Review Bottlenecks

Delays in the review process rarely spread evenly across every stage. They cluster. Tracking pickup time, review time, and time to merge as distinct metrics tells you exactly where work stalls, so you can act on the right problem.

Once you know which stage is causing friction, the fixes become clear:

- If pickup time is high, reviewer load is likely uneven. Redistributing assignments or rotating ownership can cut wait times without changing how reviews are actually conducted.

- If review time itself is long, dedicated calendar blocks for code review can help. Treating review as scheduled work instead of reactive work makes a real difference in throughput.

- If PRs go quiet after opening, set a team-wide threshold for inactivity and flag anything that crosses it. A stale PR is often just a forgotten one.

The goal is not to monitor for its own sake. Separating these metrics gives you a precise signal instead of a vague sense that things are slow.
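Here is a rough sketch of how the three metrics could be separated. It assumes each PR record carries four timestamps and defines pickup as opened to first review, review as first review to approval, and merge as approval to merge. Those boundaries and all names are assumptions, so adapt them to whatever your tooling records.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median

STAGES = ("pickup", "review", "merge")


@dataclass
class PullRequest:
    opened_at: datetime
    first_review_at: datetime
    approved_at: datetime
    merged_at: datetime


def stage_durations(pr):
    """Split one PR's lifetime into the three stages."""
    return {
        "pickup": pr.first_review_at - pr.opened_at,
        "review": pr.approved_at - pr.first_review_at,
        "merge": pr.merged_at - pr.approved_at,
    }


def median_hours_by_stage(prs):
    """Median duration of each stage, in hours, across merged PRs."""
    result = {}
    for stage in STAGES:
        hours = [stage_durations(pr)[stage].total_seconds() / 3600 for pr in prs]
        result[stage] = median(hours)
    return result


def slowest_stage(prs):
    """Name of the stage with the highest median duration."""
    medians = median_hours_by_stage(prs)
    return max(medians, key=medians.get)
```

Medians are used instead of averages so that one PR that sat over a holiday does not dominate the picture.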

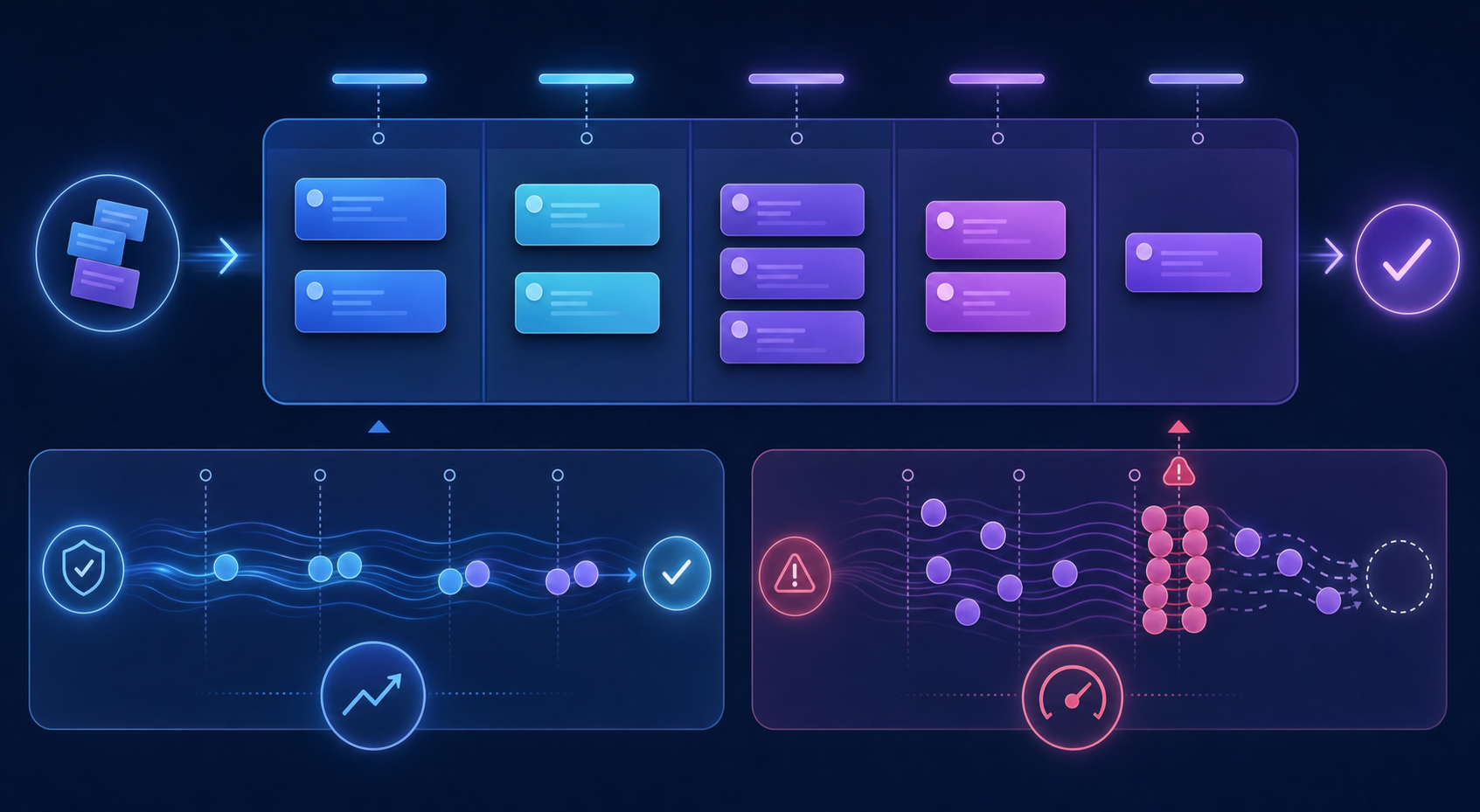

Experiment and Validate Changes in Isolated Environments

Frequent revision cycles often stem from unclear scope or untested changes landing directly in shared branches. When engineers skip isolated environments, even minor refactors can ripple into unexpected merge conflicts, forcing additional review rounds that add days to your timeline.

Feature flags and short-lived branches let teams validate changes before they touch the main codebase. This keeps PRs focused on one thing, which reviewers can assess far more quickly.

Practices That Keep PR Scope Contained

Before opening a review, consider building these habits into your workflow:

- Break work into smaller, testable units so each PR has a single, clear purpose that reviewers can assess without context-switching across unrelated changes.

- Use feature flags to ship code incrementally, letting you confirm behavior in a controlled state before the change is visible to the rest of the team.

- Run automated tests in isolated environments before requesting review, so feedback is focused on logic and design instead of catching regressions.

- Document what the PR does and does not change, giving reviewers a clear boundary and reducing the back-and-forth that inflates cycle time.
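To illustrate the feature-flag habit, here is a minimal sketch that reads flags from environment variables. The `FEATURE_` prefix, the flag name, and the discount logic are all made up for the example; real teams often use a dedicated flag service instead.

```python
import os


def flag_enabled(name, env=None):
    """True when FEATURE_<NAME> is set to a truthy value."""
    env = os.environ if env is None else env
    return env.get(f"FEATURE_{name.upper()}", "").lower() in {"1", "true", "on"}


def checkout_total(prices, env=None):
    """Sum prices, applying the new discount only behind its flag."""
    subtotal = sum(prices)
    # New behavior ships dark: it runs only when the flag is switched on.
    if flag_enabled("bulk_discount", env) and len(prices) >= 3:
        return round(subtotal * 0.9, 2)
    return subtotal
```

Because the old path stays the default, the PR that adds the discount can merge on its own, and turning the flag on becomes a separate, reviewable step.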

Final Thoughts on Shortening Your Review Pipeline

Most teams can reduce PR time by fixing just two things: PR size and reviewer expectations. Break changes into focused units, set an 8-hour review target, and track what slows you down. Automation handles the low-level checks while your team focuses on logic and design. The improvements show up in days, not quarters.

FAQ

What's the ideal PR size to reduce review time?

Keep PRs under 225 lines of code if you want elite-level review speed. Research shows that elite teams stay below this threshold, while strong teams stay under 400 lines. Each PR should also contain one logical change and avoid mixing refactors with feature work.

How long should PR reviews actually take?

Set a team target of 8 hours for first review on 95% of PRs. The median across organizations is 15 hours (nearly two business days), so hitting this target roughly halves the wait for a first review.

AI code review tools vs manual reviews: which is faster?

AI tools cut PR time by catching bugs and style issues before human reviewers open the diff, with developers completing tasks up to 50% faster according to McKinsey research. Manual reviews still matter for architecture and business logic, but AI handles the repetitive checks that slow approvals.

Can I reduce PR time without adding more reviewers?

Yes. Cap work in progress at two active PRs per engineer, and require one to be merged or closed before a new one is opened. This creates a pull-based system where finishing takes priority over starting, clearing bottlenecks without changing team size.

When should I use feature flags instead of long-lived branches?

Use feature flags when you need to validate changes incrementally without blocking shared branches. This keeps PRs focused on single changes that reviewers can assess quickly, cutting down the revision cycles that add days to your timeline.