Most teams think they’ve figured out how to test PRs before merge because their CI/CD pipelines run unit tests and linters. But here’s what actually happens: tests pass, code merges, and then product teams uncover interaction bugs or UX issues no automated test could catch. The real question isn’t whether you’re testing before merge; it’s whether you’re testing the right things with the right people. Technical tests validate code, but someone still needs to confirm that the code solves the actual problem.

TLDR:

- Testing PRs before merge catches bugs while changes are isolated, keeping main branches stable.

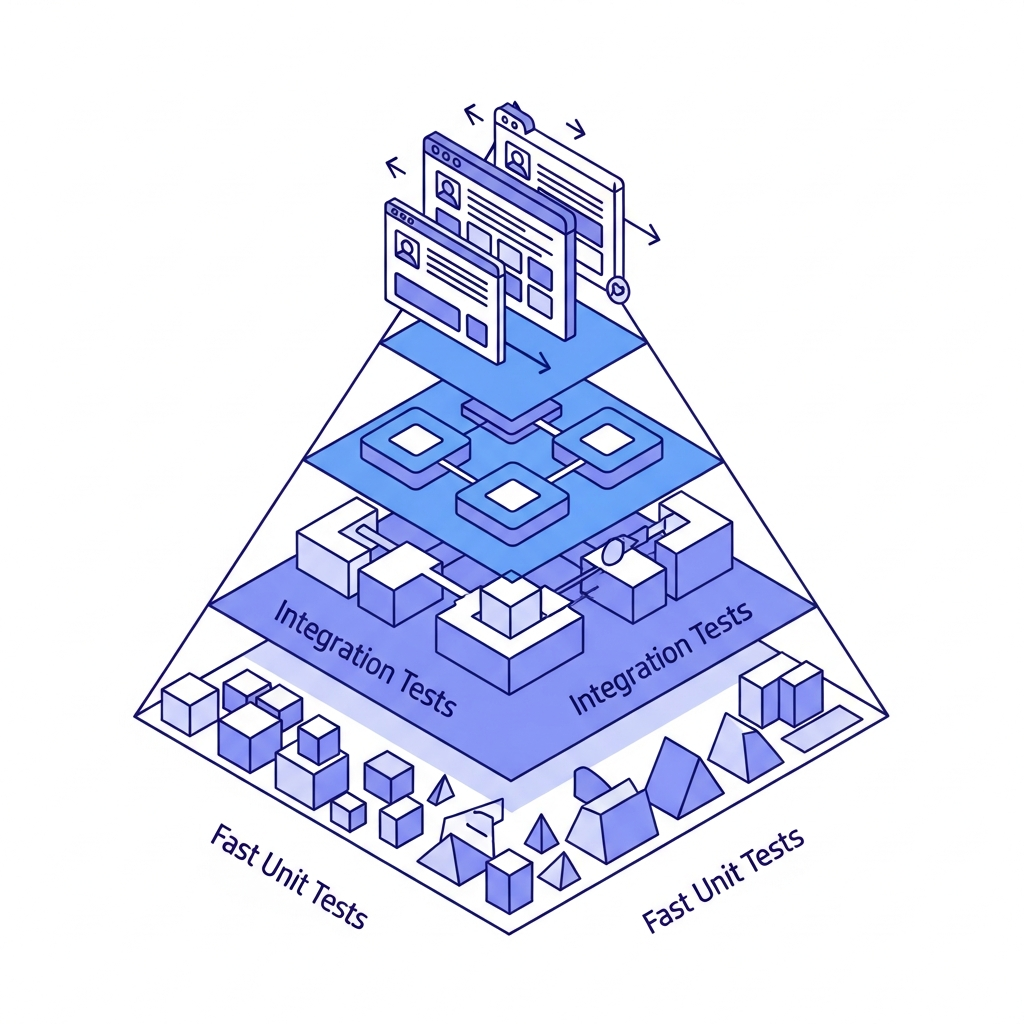

- Run unit tests first (seconds), then integration tests, then end-to-end tests, keeping the pre-merge end-to-end run under 10 minutes.

- PRs under 200 lines get reviewed about 3x faster, and running test jobs in parallel can cut suite runtime by 60-70%.

- Isolated environments per PR reduce conflicts when multiple developers test simultaneously.

- Some modern systems can connect sandboxes to your real codebase so product teams validate changes pre-merge.

What Pre-Merge Testing Is and Why It Matters

Pre-merge testing validates code changes before they integrate into your main branch. Instead of finding bugs after merge, you catch them while the pull request is still open. This moves quality control left in your development workflow.

The difference matters more than you might think. Post-merge testing happens after code reaches the main branch, when failures block other developers and require emergency fixes. Pre-merge testing isolates risk to individual branches, keeping the main branch stable and deployable.

The stakes have grown with deployment velocity. The continuous delivery market is projected to grow from $5.27 billion in 2025 to $6.5 billion in 2026, a 23.4% CAGR. Meanwhile, 82% of teams now deploy weekly, yet they lose an average of 7 hours per week to AI-related inefficiencies, and verification bottlenecks are a primary culprit.

Pre-merge testing reduces that lost time by validating changes before they cascade through your team's workflow.

Types of Pre-Merge Tests You Should Run

Different test types catch different problems, and running them in the right order saves time. Start with unit tests that verify individual functions. They finish in seconds and catch basic errors before slower tests run.

Integration tests come next to check how components interact. These validate database connections and API calls. They take longer but catch problems that unit tests miss.

End-to-end tests simulate real user behavior. Run them pre-merge only if they finish under 10 minutes. Longer suites should run a subset before merge and the full version after.
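The ordering described here amounts to a fail-fast pipeline: run the cheapest checks first, stop as soon as one fails, and decide whether the end-to-end suite fits the pre-merge budget. A minimal Python sketch, assuming each stage is a callable that returns True on success and carries a rough duration estimate; `Stage`, `run_fail_fast`, and `choose_e2e_scope` are hypothetical names, not part of any CI tool.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

E2E_BUDGET_SECONDS = 10 * 60  # the 10-minute pre-merge budget for end-to-end tests


@dataclass
class Stage:
    name: str
    run: Callable[[], bool]  # returns True when the stage passes
    est_seconds: float       # rough expected duration, used only for ordering


def choose_e2e_scope(estimated_seconds: float) -> str:
    """Run the full E2E suite pre-merge only when it fits the budget."""
    return "full" if estimated_seconds < E2E_BUDGET_SECONDS else "subset"


def run_fail_fast(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages fastest-first and stop at the first failure.

    Returns (all_passed, names of the stages that actually ran).
    """
    ran: list[str] = []
    for stage in sorted(stages, key=lambda s: s.est_seconds):
        ran.append(stage.name)
        if not stage.run():
            return False, ran
    return True, ran


if __name__ == "__main__":
    stages = [
        Stage("e2e", lambda: True, 8 * 60),
        Stage("unit", lambda: True, 5),
        Stage("integration", lambda: False, 120),  # simulated failure
    ]
    print(run_fail_fast(stages))       # the e2e stage never starts
    print(choose_e2e_scope(25 * 60))   # a 25-minute suite gets a pre-merge subset
```

Sorting by estimated duration rather than hard-coding the order keeps the fast feedback property even as stages are added.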

Static analysis and linters catch style violations and potential bugs without executing code. They run instantly and belong in every pre-merge check.

Security scans identify vulnerable dependencies. Block merges on critical findings but allow low-severity issues through to avoid bottlenecks.
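The severity gate can be expressed as a threshold check over scanner output. This sketch assumes findings arrive as dicts with hypothetical `id` and `severity` keys on a four-level scale; real scanners use their own formats, so map them onto this shape first. Unknown severities are treated as critical so that a change in report format cannot silently let everything through.

```python
from __future__ import annotations

# Ascending severity levels. These names are an assumption; map your
# scanner's own levels onto them.
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


def _rank(severity: str) -> int:
    """Position of a severity in SEVERITY_ORDER; unknown levels fail closed."""
    level = severity.lower()
    if level in SEVERITY_ORDER:
        return SEVERITY_ORDER.index(level)
    return len(SEVERITY_ORDER) - 1  # treat anything unrecognized as critical


def blocking_findings(findings: list[dict], threshold: str = "critical") -> list[dict]:
    """Return the findings at or above the blocking threshold."""
    cutoff = _rank(threshold)
    return [f for f in findings if _rank(f["severity"]) >= cutoff]


def should_block_merge(findings: list[dict], threshold: str = "critical") -> bool:
    """Block the merge only when at least one finding meets the threshold."""
    return bool(blocking_findings(findings, threshold))


if __name__ == "__main__":
    report = [
        {"id": "lib-a", "severity": "low"},
        {"id": "lib-b", "severity": "CRITICAL"},
    ]
    print([f["id"] for f in blocking_findings(report)])  # ['lib-b']
    print(should_block_merge(report))                    # True
```

Raising `threshold` to `"high"` tightens the gate without touching the rest of the pipeline.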

Performance tests usually run post-merge due to infrastructure needs. The exception: test critical paths like authentication before merging changes that affect them directly.
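Deciding whether a change "affects a critical path" usually comes down to which files it touches. A sketch, assuming you already have the list of changed paths (for example from `git diff --name-only origin/main...HEAD`) and that the glob patterns in `CRITICAL_PATTERNS` are hypothetical placeholders for your own performance-sensitive directories:

```python
from __future__ import annotations

from fnmatch import fnmatchcase
from typing import Sequence

# Hypothetical globs for performance-critical code; adjust to your repository.
# In fnmatch patterns "*" also matches "/", so "src/auth/*" covers subdirectories.
CRITICAL_PATTERNS = ("src/auth/*", "src/payments/*")


def needs_premerge_perf(
    changed_files: Sequence[str], patterns: Sequence[str] = CRITICAL_PATTERNS
) -> bool:
    """True when any changed file falls under a performance-critical path."""
    return any(
        fnmatchcase(path, pattern) for path in changed_files for pattern in patterns
    )


if __name__ == "__main__":
    print(needs_premerge_perf(["src/auth/session.py", "README.md"]))  # True
    print(needs_premerge_perf(["docs/setup.md"]))                     # False
```

`fnmatchcase` is used instead of `fnmatch` so matching behaves the same on every operating system.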

| Test Type | Typical Execution Time | What It Catches | Pre-Merge Recommendation |
|---|---|---|---|
| Unit Tests | Seconds | Function-level bugs, logic errors | Run on every PR |
| Integration Tests | 1-5 minutes | Component interaction issues, API failures, database connection problems | Run on every PR |
| End-to-End Tests | 5-30 minutes | User flow bugs, UI interaction problems | Full suite if it finishes under 10 minutes; otherwise a subset pre-merge and the full suite post-merge |
| Static Analysis | Instant to seconds | Code style violations, potential bugs, type errors | Run on every PR |
| Security Scans | 1-3 minutes | Vulnerable dependencies, security risks | Block merge on critical findings only |

Setting Up Automated Testing in CI/CD Pipelines

Automation makes pre-merge testing mandatory instead of optional. When a pull request opens, your CI/CD pipeline triggers test suites without manual intervention.

GitHub Actions users can add a workflow file such as .github/workflows/test.yml that runs on pull_request events. The workflow defines which target branches trigger it and which test commands to run, typically as steps that check out the code, install dependencies, and execute the tests.

GitLab CI uses a .gitlab-ci.yml file with a test stage whose jobs run in merge request pipelines. Use rules to control job execution based on file changes or branch patterns.

Target the pull request activity types opened, synchronize (new commits pushed), and reopened. Skip draft PRs to conserve compute resources.
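In GitHub Actions these filters are usually declared in the workflow itself, through the `types` list under `on: pull_request` and an `if:` condition on `github.event.pull_request.draft`. The Python sketch below only makes the same decision logic explicit and testable against the event payload that GitHub Actions exposes at `GITHUB_EVENT_PATH`. It also includes the `ready_for_review` activity type, an addition beyond the three listed above, so a draft gets tested as soon as it is marked ready. The output name `run_tests` is hypothetical.

```python
from __future__ import annotations

import json
import os

# The three activity types named above, plus ready_for_review so that a draft
# PR is tested when it is marked ready (an addition, not part of the list).
RUN_ON_ACTIONS = {"opened", "synchronize", "reopened", "ready_for_review"}


def should_run_tests(event: dict) -> bool:
    """Decide from a pull_request event payload whether to run the suite."""
    if event.get("action") not in RUN_ON_ACTIONS:
        return False
    # Skip drafts to save compute; "draft" is a field on the pull_request object.
    return not event.get("pull_request", {}).get("draft", False)


if __name__ == "__main__":
    event_path = os.environ.get("GITHUB_EVENT_PATH")
    if event_path:  # only set when running inside GitHub Actions
        with open(event_path, encoding="utf-8") as fh:
            decision = should_run_tests(json.load(fh))
        output_path = os.environ.get("GITHUB_OUTPUT")
        if output_path:  # expose the decision to later steps as a step output
            with open(output_path, "a", encoding="utf-8") as fh:
                fh.write(f"run_tests={'true' if decision else 'false'}\n")
        print(f"run_tests={decision}")
```

Keeping the rule in a pure function means it can be unit-tested with hand-written payloads instead of real pull requests.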

Split test suites across multiple jobs. Running unit tests, integration tests, and linters concurrently can reduce total runtime by 60-70%.
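On a CI service the concurrent jobs run on separate runners, but the effect is easy to see locally: when independent checks start together, wall-clock time tracks the slowest job instead of the sum of all of them. A sketch using Python's standard `concurrent.futures` and `subprocess`; the job names and the placeholder commands in the `__main__` block are assumptions to replace with your real test and lint commands.

```python
from __future__ import annotations

import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor


def run_job(name: str, cmd: list[str]) -> tuple[str, int]:
    """Run one check as a subprocess and return (name, exit code)."""
    completed = subprocess.run(cmd, capture_output=True, text=True)
    return name, completed.returncode


def run_jobs_in_parallel(jobs: dict[str, list[str]]) -> dict[str, int]:
    """Start all independent jobs at once; total time tracks the slowest job."""
    with ThreadPoolExecutor(max_workers=len(jobs) or 1) as pool:
        futures = [pool.submit(run_job, name, cmd) for name, cmd in jobs.items()]
        return dict(future.result() for future in futures)


if __name__ == "__main__":
    # Placeholder commands standing in for unit tests, integration tests and linting.
    jobs = {
        "unit": [sys.executable, "-c", "print('unit ok')"],
        "integration": [sys.executable, "-c", "print('integration ok')"],
        "lint": [sys.executable, "-c", "print('lint ok')"],
    }
    results = run_jobs_in_parallel(jobs)
    print(results)
    print("all passed" if all(code == 0 for code in results.values()) else "failures")
```

Threads are sufficient here because each job spends its time waiting on a child process, not running Python code.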

Configure branch protection rules blocking merges when tests fail, preventing broken code from reaching your main branch. GitHub's Octoverse 2025 report found that repositories with AI-assisted review had 32% faster merge times and 28% fewer post-merge defects compared to repositories using only human review.

Local Testing before Pushing Your Pull Request

Running tests locally before pushing code prevents failed builds from reaching your CI pipeline. Your workstation becomes the first line of defense against broken code.

Start by running the same test commands your CI pipeline executes. If your pipeline runs npm test, run it locally first. This catches failures immediately instead of finding them 5 minutes later in a remote build.

Pre-commit hooks help automate this step. Git hooks are scripts that Git runs at specific points in its workflow; the pre-commit hook runs before a commit is recorded. Install a tool like Husky to configure hooks that run linters and fast-running tests. If a check fails, the commit aborts and you fix the issue before it leaves your machine.

Focus hooks on speed. Run only unit tests and linters that finish under 30 seconds. Slow hooks train developers to skip them with the --no-verify flag.
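Husky is the usual choice in JavaScript projects, but Git will run any executable file placed at `.git/hooks/pre-commit`. A minimal Python sketch of such a hook that enforces the 30-second budget across all checks, assuming `ruff` and `pytest` as hypothetical stand-ins for your own lint and unit-test commands:

```python
#!/usr/bin/env python3
"""Sketch of a Git pre-commit hook that runs fast checks under a time budget."""
from __future__ import annotations

import subprocess
import sys
import time

# Hypothetical commands: replace them with the lint and unit-test commands your CI runs.
CHECKS = [
    ["ruff", "check", "."],
    ["pytest", "-q", "tests/unit"],
]
BUDGET_SECONDS = 30.0  # keep the whole hook fast so nobody reaches for --no-verify


def run_checks(checks: list[list[str]], budget: float = BUDGET_SECONDS) -> int:
    """Run checks in order; return 0 if all pass, else a non-zero exit code."""
    start = time.monotonic()
    for cmd in checks:
        remaining = budget - (time.monotonic() - start)
        if remaining <= 0:
            print("pre-commit: time budget exhausted", file=sys.stderr)
            return 1
        try:
            result = subprocess.run(cmd, timeout=remaining)
        except FileNotFoundError:
            print(f"pre-commit: command not found: {cmd[0]}", file=sys.stderr)
            return 127
        except subprocess.TimeoutExpired:  # subprocess.run kills the child first
            print(f"pre-commit: checks exceeded the {budget:g}s budget", file=sys.stderr)
            return 1
        if result.returncode != 0:
            return result.returncode
    return 0
```

To use it as the hook itself, save the file as `.git/hooks/pre-commit`, make it executable, and end it with `sys.exit(run_checks(CHECKS))`. Git aborts the commit whenever a pre-commit hook exits non-zero, and failing the hook when the budget runs out keeps slow checks visible instead of letting them creep in.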

Test in your local development environment too. Start your application, click through affected features, and verify changes work as expected. This catches visual bugs and interaction problems that automated tests miss.

Local testing reduces CI/CD costs and speeds up feedback. Failed builds consume compute minutes and delay merge windows. Catching problems before pushing removes these wasted cycles.

Testing Pull Requests in Isolated Environments

Shared staging environments create bottlenecks when multiple pull requests compete for the same resources. One developer's database migration breaks another's feature test. Changes conflict, test results become unreliable, and teams waste time coordinating access.

Isolated environments solve this by provisioning dedicated instances per pull request. Each PR gets its own database, application server, and dependencies. Tests run against realistic infrastructure without interference from parallel work.

Container orchestration makes this practical. Docker Compose definitions spin up full application stacks in minutes. Kubernetes namespaces partition a shared cluster so each PR gets its own isolated set of resources that can mirror production topology. Cloud providers offer ephemeral environments through infrastructure-as-code templates.
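Per-PR environments need names that are unique and valid for the tooling that consumes them. This sketch derives a single name from the PR number and branch that satisfies both Docker Compose project-name rules and Kubernetes namespace rules (lowercase letters, digits, and hyphens, starting and ending with a letter or digit, at most 63 characters), then builds the matching create and teardown commands. The `pr-<number>-<branch>` naming scheme is an assumption, not a convention of either tool.

```python
from __future__ import annotations

import re


def pr_env_name(branch: str, pr_number: int, max_len: int = 63) -> str:
    """Build a name valid as a Compose project name and a Kubernetes namespace."""
    # Collapse every run of characters outside [a-z0-9] into a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", branch.lower()).strip("-")
    prefix = f"pr-{pr_number}"
    name = f"{prefix}-{slug}" if slug else prefix
    # Truncate to the label length limit without leaving a trailing hyphen.
    return name[:max_len].rstrip("-")


def env_commands(name: str) -> dict[str, list[str]]:
    """Commands to create and tear down an isolated stack for one PR."""
    return {
        "compose_up": ["docker", "compose", "-p", name, "up", "-d"],
        "compose_down": ["docker", "compose", "-p", name, "down", "--volumes"],
        "namespace_create": ["kubectl", "create", "namespace", name],
        "namespace_delete": ["kubectl", "delete", "namespace", name],
    }


if __name__ == "__main__":
    name = pr_env_name("Feature/Login_Page", 42)
    print(name)  # pr-42-feature-login-page
    print(env_commands(name)["compose_up"])
```

Tearing the environment down with `--volumes` (or by deleting the namespace) when the PR closes keeps per-PR databases from accumulating.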

Preview environments take isolation further by generating unique URLs for each PR. Stakeholders interact with actual changes before merge, catching integration issues that automated tests miss.

Code Review Best Practices for Pull Requests

Code review acts as the final validation step before merging, but delays can offset gains from automated testing.

LinearB's 2025 benchmarks show median review cycle time is 24-48 hours, with only the top 10% of teams achieving sub-4-hour first reviews and sub-12-hour merge times.

Keep PRs under 400 lines of code to speed reviews. Larger changes trigger procrastination because reviewers struggle to find time blocks. Split features into smaller, logical chunks that maintain functionality.
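To enforce the size guideline in CI, you can count changed lines from `git diff --numstat`, which prints added and deleted line counts per file (binary files show `-` for both). A sketch, assuming `origin/main` as the base branch and counting additions plus deletions against the 400-line threshold; whether deletions should count toward review size is a judgment call.

```python
from __future__ import annotations

import subprocess

MAX_CHANGED_LINES = 400  # the review-size threshold from the guideline above


def changed_lines(numstat: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` output.

    Each line is "added<TAB>deleted<TAB>path"; binary files report "-" and are skipped.
    """
    total = 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) < 3 or parts[0] == "-":
            continue
        total += int(parts[0]) + int(parts[1])
    return total


def pr_size(base: str = "origin/main") -> int:
    """Count changed lines between the merge base with `base` and HEAD."""
    out = subprocess.run(
        ["git", "diff", "--numstat", f"{base}...HEAD"],
        capture_output=True, text=True, check=True,
    ).stdout
    return changed_lines(out)


if __name__ == "__main__":
    sample = "10\t2\tsrc/app.py\n-\t-\tassets/logo.png\n"
    size = changed_lines(sample)
    print(size, "lines;", "split this PR" if size > MAX_CHANGED_LINES else "size OK")
```

The three-dot range compares against the merge base, so commits that landed on main after you branched do not inflate the count.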

Write clear descriptions covering what changed and why. Include screenshots for UI updates, migration commands for database changes, and screen recordings of complex user flows.

Assign reviewers based on expertise and availability, tracking assignments in tools like Linear. For urgent fixes, ping specific reviewers directly through Slack instead of waiting for volunteers.
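Expertise-based assignment can be approximated by matching changed files against the areas each reviewer owns, then breaking ties by current load. GitHub's built-in CODEOWNERS file covers the expertise half; the sketch below adds availability and load, using a hypothetical `Reviewer` record whose fields you would populate from your own tooling.

```python
from __future__ import annotations

from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass
class Reviewer:
    name: str
    owns: list[str]          # glob patterns for the reviewer's areas of expertise
    open_reviews: int = 0    # reviews currently assigned
    available: bool = True   # e.g. False while on leave


def expertise(reviewer: Reviewer, changed_files: list[str]) -> int:
    """Number of changed files that fall inside the reviewer's areas."""
    return sum(
        any(fnmatchcase(path, pattern) for pattern in reviewer.owns)
        for path in changed_files
    )


def pick_reviewer(changed_files: list[str], reviewers: list[Reviewer]) -> str | None:
    """Choose the available reviewer with the most relevant expertise,
    breaking ties by the lightest current review load."""
    candidates = [
        r for r in reviewers if r.available and expertise(r, changed_files) > 0
    ]
    if not candidates:
        return None  # nobody matches: fall back to a manual request
    best = max(candidates, key=lambda r: (expertise(r, changed_files), -r.open_reviews))
    return best.name


if __name__ == "__main__":
    team = [
        Reviewer("ana", ["src/api/*"], open_reviews=3),
        Reviewer("cy", ["src/api/*"], open_reviews=1),
    ]
    print(pick_reviewer(["src/api/routes.py"], team))  # cy has the lighter load
```

Returning `None` rather than picking someone at random keeps the fallback explicit, which is where a direct Slack ping fits.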

Frame feedback as questions when possible: "Could this function handle null values?" invites discussion while avoiding friction. Focus on correctness, security, and maintainability over style issues that linters catch.

Handling Failed Tests and Merge Conflicts

Failed tests signal problems early, but fixing them requires reading CI logs correctly. Start with the summary section showing which test suites failed. Scroll to the first failure and ignore cascading errors that stem from the same root cause.

Look for assertion messages, stack traces, and exit codes. Flaky tests that pass on retry usually indicate timing issues or shared state between test cases. Consistent failures point to actual bugs in your changes.
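The retry heuristic can be made explicit: rerun a failing test a few times and label it flaky if any retry passes, consistent if none does. A sketch assuming each test can be invoked as a zero-argument callable that returns True on success; `classify_failure` and the default of two retries are illustrative choices, not a standard.

```python
from __future__ import annotations

from typing import Callable


def classify_failure(run_test: Callable[[], bool], retries: int = 2) -> str:
    """Rerun a failing test to separate flaky failures from consistent ones.

    Returns "pass", "flaky" (failed, then passed on a retry) or "consistent".
    """
    if run_test():
        return "pass"
    for _ in range(retries):
        if run_test():
            return "flaky"
    return "consistent"


if __name__ == "__main__":
    outcomes = iter([False, True])  # fails once, then passes: a timing-style flake
    print(classify_failure(lambda: next(outcomes)))  # flaky
    print(classify_failure(lambda: False))           # consistent
```

A test that passes on retry is a signal, not proof: treat "flaky" results as candidates for quarantine and a fix rather than as a reason to retry indefinitely.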

Merge conflicts need resolution before tests can run. Git marks conflicting sections with <<<<<<<, =======, and >>>>>>> markers. Understand both versions before choosing which to keep. When in doubt, discuss with the author of the conflicting commit.
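Before committing a resolution, it is worth confirming that no conflict markers were left behind. Git's own `git diff --check` warns about them; the sketch below shows the underlying check, matching the start, separator, and end markers plus the `|||||||` base marker that the diff3 conflict style adds. A lone `=======` line can legitimately appear in some text formats, so treat hits as prompts to look rather than as certain errors.

```python
from __future__ import annotations

import re

# Start, diff3 base, separator, and end markers that Git writes into conflicted files.
CONFLICT_MARKER = re.compile(r"^(?:<{7}|\|{7}|>{7})(?: |$)|^={7}$")


def find_conflict_markers(text: str) -> list[int]:
    """Return 1-based line numbers that look like leftover conflict markers."""
    return [
        number
        for number, line in enumerate(text.splitlines(), start=1)
        if CONFLICT_MARKER.match(line)
    ]


if __name__ == "__main__":
    sample = "a\n<<<<<<< HEAD\nmine\n=======\ntheirs\n>>>>>>> feature\nb\n"
    print(find_conflict_markers(sample))  # [2, 4, 6]
```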

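Rebase creates a linear history by replaying your commits on top of the target branch, but it rewrites those commits and may require resolving conflicts commit by commit. Merge preserves full history and resolves conflicts in a single step, making it preferable for long-lived branches or when multiple developers contributed, since rewriting a shared branch disrupts everyone who has already pulled it.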

Fix failures quickly to avoid blocking your work. If resolution takes longer than expected, mark the PR as draft to signal it's not ready for review attention.

Optimizing Pull Request Size for Faster Testing

Large pull requests slow everything down. Testing takes longer because there's more code to validate. Reviewers struggle to maintain focus across 800+ line changes. Defects hide in the noise.

The solution is counterintuitive: ship more frequently in smaller increments. PRs under 200 lines get reviewed three times faster than those exceeding 400 lines. Test runs also finish sooner when CI runs only the tests affected by a change, and failures are easier to trace because fewer changes mean a narrower failure surface.

Break features into vertical slices that deliver discrete value. Instead of one PR that builds an entire feature across the frontend, backend, and database at once, split the work into thin end-to-end increments that merge in sequence, each of which can be validated on its own, for example through a prototype. Each slice maintains logical coherence while staying reviewable.

Use feature flags to merge incomplete work safely. Your code reaches production behind a toggle, letting you integrate continuously without exposing unfinished features. This keeps PRs small while building complex functionality iteratively.
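A feature flag can be as simple as a guarded branch that defaults to off. This sketch reads flags from environment variables using a hypothetical `FEATURE_<NAME>` convention, with an optional override dict for tests; production systems usually fetch flags from a configuration service instead, but the merge-safe property is the same: the unfinished path stays dark until someone turns it on.

```python
from __future__ import annotations

import os


def flag_enabled(name: str, overrides: dict[str, bool] | None = None) -> bool:
    """Return whether a feature flag is on. Flags default to OFF, so merged but
    unfinished code stays hidden until someone deliberately enables it."""
    if overrides and name in overrides:
        return overrides[name]
    raw = os.environ.get(f"FEATURE_{name.upper()}", "")
    return raw.strip().lower() in {"1", "true", "on", "yes"}


def checkout_page(user: str) -> str:
    if flag_enabled("new_checkout"):
        return f"new checkout for {user}"  # unfinished work, merged behind the flag
    return f"legacy checkout for {user}"


if __name__ == "__main__":
    print(checkout_page("sam"))  # legacy unless FEATURE_NEW_CHECKOUT is set to a truthy value
```

Because the default is off, forgetting to configure a flag in a new environment hides the feature rather than exposing it.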

Stack dependent PRs when changes must land in sequence. Open the second PR against the first PR's branch instead of main, then rebase the second branch onto main after the first merges.
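After the first PR in a stack merges, the second branch still contains the first branch's commits. `git rebase --onto <newbase> <upstream> <branch>` replays only the commits in `<branch>` that are not in `<upstream>`, which avoids replaying work that may have been squashed into a new commit on main. A sketch that builds the commands, assuming the local parent branch still points at its pre-merge commits and that `origin` and `main` are your remote and trunk names:

```python
from __future__ import annotations


def restack_commands(
    parent: str, child: str, trunk: str = "main", remote: str = "origin"
) -> list[list[str]]:
    """Git commands that move `child` onto the trunk after `parent` has merged."""
    return [
        ["git", "fetch", remote],
        # Replay only child's own commits (parent..child) onto the updated trunk.
        ["git", "rebase", "--onto", f"{remote}/{trunk}", parent, child],
        # Rebasing rewrote the branch; --force-with-lease refuses to clobber
        # remote commits you have not fetched.
        ["git", "push", "--force-with-lease", remote, child],
    ]


if __name__ == "__main__":
    for cmd in restack_commands("feature-1", "feature-2"):
        print(" ".join(cmd))
```

After pushing, point the second PR's base at main if your hosting service has not already retargeted it.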

How Alloy Accelerates Pre-Merge Product Validation

Alloy closes the gap between technical validation and product validation by connecting sandboxes to your live codebase. Product managers test interface changes using natural language commands or visual edits before engineers merge the code. Each sandbox runs independently, letting teams validate multiple variations at once without blocking developer time.

Stakeholders interact with real product interfaces instead of static mockups, catching UX friction and flow problems that automated tests miss. Share sandbox links with customers to validate assumptions early, shrinking feedback cycles from weeks to hours.

This adds a second validation gate: engineers verify code correctness through technical tests while product teams confirm it solves actual user problems. Both happen pre-merge, reducing rework on features that reach production.

FAQs

When should I use isolated environments versus shared staging?

Use isolated environments when multiple team members are testing PRs simultaneously or when your changes involve database migrations or infrastructure modifications. Shared staging works for simple changes, but dedicated instances per PR prevent conflicts and unreliable test results.

What's the difference between rebasing and merging when resolving conflicts?

Rebase replays your commits on top of the target branch to create linear history, while merge preserves full history and handles complex conflicts better. Choose merge for long-lived branches or when multiple developers contributed to the PR.

Can I merge incomplete features without breaking production?

Yes, using feature flags lets you merge code behind a toggle that keeps unfinished functionality hidden from users. This keeps PRs small and maintains continuous integration while you build complex features iteratively across multiple merges.

Final Thoughts on Validating Code Before Integration

Shifting testing left saves time by catching issues before they spread through your codebase. Knowing how to test PRs before merge means combining automated checks with real product validation so problems surface early without slowing your team down. With tools like Alloy, teams can validate changes in real interfaces before code merges, closing the gap between code that works and code that actually solves the problem. The goal is to balance thorough testing with fast feedback so developers stay in flow and product teams stay aligned.