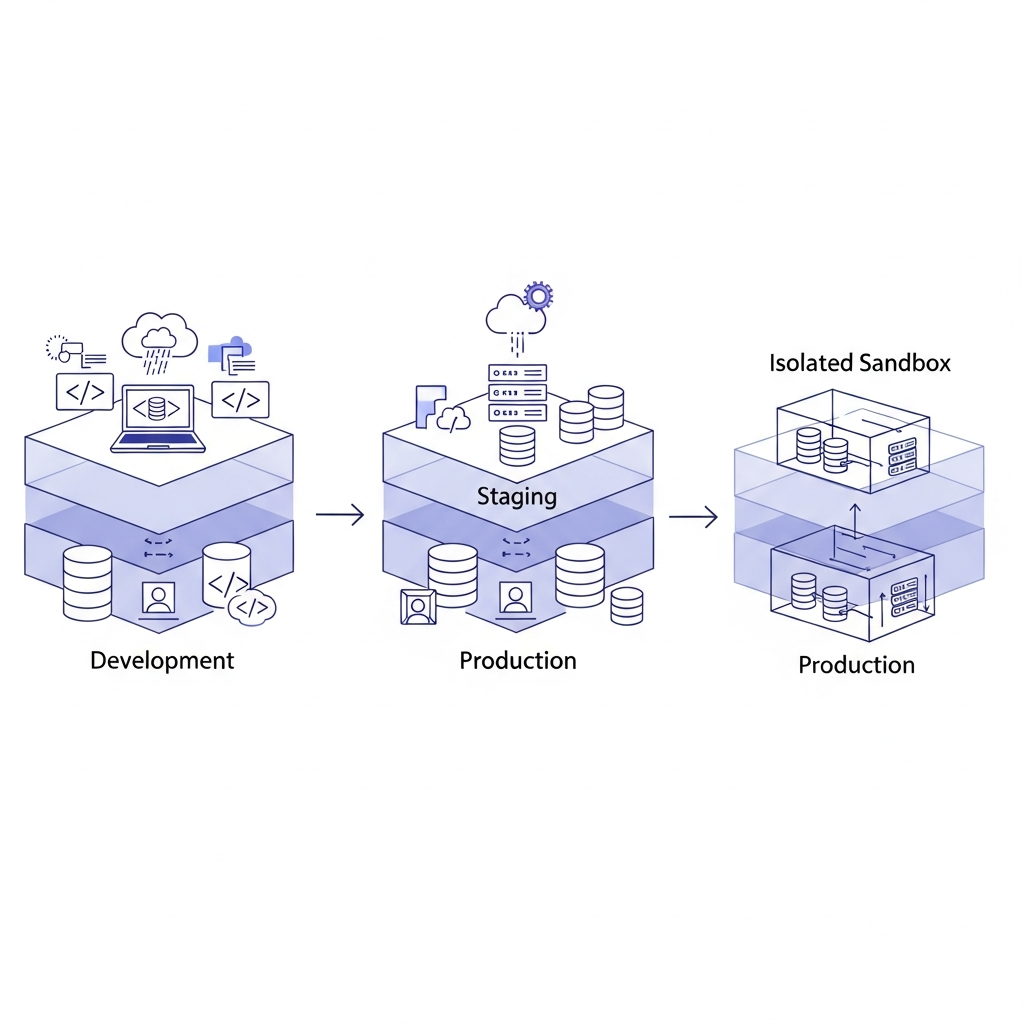

Most teams focus on whether new features function, but the real goal is to test product changes before deployment to see how they behave for real users. Bugs are only part of the risk. Confusing navigation, broken edge cases, and unexpected user behavior often appear only when people interact with a working version of the product. Running tests inside isolated environments that mirror your live application allows teams to validate changes without exposing customers to mistakes. Some newer tools even create shareable cloud sandboxes where teams can modify real product interfaces and validate flows before a release goes live.

TLDR:

- Strong pre-deployment testing practices help teams achieve change failure rates below 5% by catching bugs before users see them.

- Test in isolated sandboxes that mirror production to validate changes without risking your live product.

- Progressive rollouts limit blast radius by testing with small user groups before full deployment.

- Cloud environments let non-technical teams test real product changes and gather feedback in minutes.

- Some modern tools create shareable cloud sandboxes where you modify your actual product using natural language.

Why Pre-Deployment Testing Matters

Deployment failures break trust, burn budgets, and derail roadmaps.

When untested changes hit production, the damage spreads fast. Engineering teams drop everything to roll back or patch. Customer support fields complaints. Revenue-generating features go dark. Stakeholders lose confidence in your release process.

High-performing teams often maintain change failure rates below 5%, according to DevOps Research and Assessment (DORA) benchmarks. That's not luck. It's the result of catching problems before they reach users.
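To make that benchmark concrete, here is a minimal sketch of how a team might compute its own change failure rate from a deployment log. The `Deployment` record and its `caused_incident` field are assumptions made for illustration, not a DORA-defined schema; the idea is simply failed deployments divided by total deployments.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    id: str
    caused_incident: bool  # hypothetical field: True if the deploy needed a rollback or hotfix


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Return the fraction of deployments that led to a production failure."""
    if not deployments:
        return 0.0
    failed = sum(1 for d in deployments if d.caused_incident)
    return failed / len(deployments)


# Illustrative history: 50 deployments, 2 of which caused incidents.
history = [Deployment(f"deploy-{i}", caused_incident=(i in {7, 31})) for i in range(50)]
rate = change_failure_rate(history)
print(f"Change failure rate: {rate:.1%}")  # 2 of 50 -> 4.0%
```

Tracking this number over time, instead of looking at a single snapshot, shows whether changes to your testing process are actually reducing failures.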

Every production incident carries hidden costs. There's the immediate firefighting: engineers pulled from planned work, war rooms convened, post-mortems written. Then come the secondary effects: delayed features, cautious stakeholders, and slower iteration velocity as teams add process to prevent repeats.

Pre-deployment testing closes the gap between "this worked on my machine" and "this works for our users." It gives you the confidence to ship faster because you've already validated changes in conditions that mirror production. You catch breaking changes, performance regressions, and edge cases before they become incidents that wake up your on-call engineer at 3 AM.

The Cost of Skipping Pre-Deployment Validation

The price of shipping untested changes shows up in your budget faster than you think.

Direct costs hit immediately. There's the engineering time to diagnose and fix production issues, typically pulling 3-5 developers off planned work for hours or days. Customer support scales up to handle the flood of complaints.

If the failure affects critical flows like checkout or authentication, you're measuring downtime in lost revenue per minute. According to recent data, the average cost of IT downtime now exceeds $14,000 per minute for midsize businesses and can reach $23,750 per minute for large enterprises, with over 90% reporting costs exceeding $300,000 per hour. In manufacturing in particular, downtime caused by cyber incidents costs small to mid-sized companies over $200,000 on average, and enterprise-scale losses escalate into the tens of millions of dollars.

Then come the delays. Software deployments were 26% more likely to be delayed than delivered early, and those delays create significant operational and engineering costs. On average, a delay holds the deployment schedule up by 3.8 months. That's more than a full quarter lost to problems that should have been caught in testing.

The hidden costs hurt more. Teams become risk-averse, adding review layers that slow future releases. Product roadmaps stall while you fix what broke. Customers who hit bugs churn at higher rates than those who never experience problems. Your engineering team's morale tanks when they spend weeks firefighting instead of building.

You're either paying for testing before deployment or paying for incidents after. The second option always costs more.

Core Testing Types for Pre-Deployment Validation

Different testing types catch different problems. Knowing which to run when keeps you from wasting time on redundant checks or missing gaps that cause production fires.

Functional testing verifies that features work as intended. You're checking user flows, button clicks, form submissions, and data displays. This catches broken logic, UI bugs, and regressions in existing behavior. Run these tests whenever code changes affect user-facing functionality.

Integration testing validates how different parts of your product work together. APIs need to communicate correctly. Database queries need to return accurate data. Third-party services need to respond as expected. This catches miscommunications between services that functional tests miss, because functional tests often mock their dependencies.

Performance testing reveals how changes affect speed and scale. Load times, response rates, database query performance, and memory usage all matter. A feature might work perfectly for one user but collapse under realistic traffic.

Security testing finds vulnerabilities before attackers do. Authentication flows, data access controls, input validation, and API endpoints all need scrutiny. This catches exposed data, broken permissions, and injection risks.

| Testing Type | Purpose | What It Catches | When to Run |
|---|---|---|---|
| Functional Testing | Verifies features work as intended | Broken logic, UI bugs, regressions in existing behavior | Whenever code changes affect user-facing functionality |
| Integration Testing | Validates how different parts work together | API miscommunications, database query issues, third-party service failures | When services or components interact or dependencies change |
| Performance Testing | Reveals impact on speed and scale | Slow load times, poor response rates, memory leaks, scalability issues | Before releases affecting critical paths or high-traffic features |
| Security Testing | Finds vulnerabilities before attackers | Exposed data, broken permissions, injection risks, authentication flaws | On all authentication flows, API endpoints, and data access changes |

Setting Up Isolated Test Environments

Test environments only work if they accurately reflect what users will experience. The closer your testing conditions match production, the fewer surprises you'll face after deployment.

Development environments are where engineers write and iterate on code. These are typically local machines or personal cloud instances. They're fast and flexible but often drift from production configurations. Database schemas get out of sync. Feature flags differ. API versions mismatch.

Staging environments replicate production as closely as possible. Same infrastructure, same configurations, same data structures. This is where you run your final validation before release. Staging catches environment-specific issues that never appear in development because the setup was too different.

Isolated sandboxes let multiple teams test simultaneously without interfering with each other. Each sandbox gets its own database state, feature flag settings, and user sessions. You can test conflicting changes in parallel without one breaking the other's validation work.
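The isolation idea can be sketched in a few lines. This is a toy model under stated assumptions, not how any particular sandbox product works: the `Sandbox` class and `create_sandbox` helper are hypothetical, and plain dictionaries stand in for a real database and flag service. What it shows is that each sandbox starts from a copy of a shared baseline, so edits in one never leak into another.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class Sandbox:
    name: str
    database: dict
    feature_flags: dict
    sessions: dict = field(default_factory=dict)


def create_sandbox(name: str, baseline_db: dict, baseline_flags: dict) -> Sandbox:
    # Deep copies keep nested records from being shared between sandboxes.
    return Sandbox(name, copy.deepcopy(baseline_db), copy.deepcopy(baseline_flags))


baseline_db = {"plans": {"pro": {"price": 49}}}
baseline_flags = {"new_checkout": False}

pricing = create_sandbox("pricing-test", baseline_db, baseline_flags)
checkout = create_sandbox("checkout-test", baseline_db, baseline_flags)

# Two teams make conflicting changes in parallel.
pricing.database["plans"]["pro"]["price"] = 59
checkout.feature_flags["new_checkout"] = True

# Each sandbox only sees its own change; the baseline is untouched.
print(checkout.database["plans"]["pro"]["price"])  # 49
print(pricing.feature_flags["new_checkout"])       # False
```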

Mirroring production means matching more than just code. You need similar data volumes, realistic user behavior patterns, and equivalent third-party service responses. Testing against 100 sample records won't reveal the performance issues that surface with 10 million.

Building a Pre-Deployment Testing Checklist

Checklists prevent the mistakes that happen when you're rushing to ship and assume you've covered everything.

A pre-deployment checklist creates consistency. Every release goes through the same validation steps, regardless of who's deploying or how urgent the change feels. This removes the "I thought someone else checked that" failures that cause production incidents.

Start with code review. It is often credited with reducing production bugs by 60-90%, and research from Basili and Selby found that code reading catches 80% more bugs per hour than testing alone, which makes it one of the most efficient bug detection methods.

Another set of eyes catches logic errors, security gaps, and implementation issues that the original developer missed. Review should verify both functionality and code quality before anything moves forward.

Run your full automated test suite. Unit tests, integration tests, and end-to-end tests all need to pass. If tests are failing or skipped, you're gambling on whether those code paths work correctly. Fix or update tests before deploying, not after.

Verify environment configurations match across development, staging, and production. Check environment variables, feature flags, API keys, and service endpoints.
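One way to automate that comparison is a small drift check. The sketch below is an assumption-laden illustration: the `find_config_drift` function, the environment names, and the sample keys are all hypothetical. It flags any key that is missing from an environment, and any key whose value differs when it is not on a list of settings that are expected to differ (service endpoints and API keys usually are).

```python
MISSING = "<missing>"


def find_config_drift(environments: dict[str, dict[str, str]],
                      expected_to_differ: frozenset = frozenset()) -> dict:
    """Return {key: {env: value}} for every key that has drifted."""
    all_keys = set().union(*(cfg.keys() for cfg in environments.values()))
    drift = {}
    for key in sorted(all_keys):
        values = {env: cfg.get(key, MISSING) for env, cfg in environments.items()}
        missing_somewhere = MISSING in values.values()
        values_differ = len(set(values.values())) > 1
        if missing_somewhere or (values_differ and key not in expected_to_differ):
            drift[key] = values
    return drift


# Hypothetical configuration snapshots for three environments.
environments = {
    "development": {"API_URL": "http://localhost:8000", "FEATURE_NEW_CHECKOUT": "true", "DB_POOL_SIZE": "5"},
    "staging": {"API_URL": "https://staging.example.com", "FEATURE_NEW_CHECKOUT": "true", "DB_POOL_SIZE": "20"},
    "production": {"API_URL": "https://api.example.com", "FEATURE_NEW_CHECKOUT": "false"},
}

drift = find_config_drift(environments, expected_to_differ=frozenset({"API_URL"}))
for key, values in drift.items():
    print(key, values)
```

Here `DB_POOL_SIZE` is reported because production lacks it, and `FEATURE_NEW_CHECKOUT` is reported because its value differs. `API_URL` is skipped because it is expected to differ per environment.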

Test database migrations separately. Run them against production-scale data in staging. Verify rollback procedures work.
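A migration rehearsal might look roughly like the sketch below. It uses an in-memory SQLite database with 1,000 generated rows as a stand-in for production-scale staging data, and the `upgrade`, `downgrade`, and `rehearse_migration` functions are hypothetical names. The point is the shape of the check: apply the migration, confirm no rows were lost, roll back, and confirm the schema and data match the starting state.

```python
import sqlite3


def table_names(conn: sqlite3.Connection) -> list[str]:
    rows = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    return [row[0] for row in rows]


def upgrade(conn: sqlite3.Connection) -> None:
    # Hypothetical migration: copy emails into a new table.
    conn.execute("CREATE TABLE user_emails (user_id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
    conn.execute("INSERT INTO user_emails (user_id, email) SELECT id, email FROM users")


def downgrade(conn: sqlite3.Connection) -> None:
    conn.execute("DROP TABLE user_emails")


def rehearse_migration(conn: sqlite3.Connection) -> dict[str, bool]:
    tables_before = table_names(conn)
    rows_before = conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]

    upgrade(conn)
    rows_migrated = conn.execute("SELECT COUNT(*) FROM user_emails").fetchone()[0]

    downgrade(conn)
    rows_after = conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]

    return {
        "rows_migrated": rows_migrated == rows_before,
        "rollback_clean": table_names(conn) == tables_before and rows_after == rows_before,
    }


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
conn.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [(f"user{i}@example.com",) for i in range(1000)],
)

result = rehearse_migration(conn)
print(result)
```

In a real rehearsal you would also time the upgrade step, since a migration that is instant on sample data can lock tables for minutes at full scale.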

Run security scans on authentication flows, API endpoints, and data access patterns.
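The checklist steps above can be sketched as a simple release gate. This is an illustration under assumptions: the `run_checklist` and `ready_to_deploy` helpers are hypothetical, and the lambdas stand in for real calls to your review tool, CI system, and scanners. Every check runs on every release, and a check that raises an error counts as a failure.

```python
from typing import Callable, NamedTuple


class CheckResult(NamedTuple):
    name: str
    passed: bool


def run_checklist(checks: list[tuple[str, Callable[[], bool]]]) -> list[CheckResult]:
    """Run every check, treating an exception as a failed check."""
    results = []
    for name, check in checks:
        try:
            passed = bool(check())
        except Exception:
            passed = False
        results.append(CheckResult(name, passed))
    return results


def ready_to_deploy(results: list[CheckResult]) -> bool:
    return all(result.passed for result in results)


# Placeholder checks; each lambda would call a real system in practice.
checks = [
    ("code review approved", lambda: True),
    ("automated test suite passed", lambda: True),
    ("environment configs verified", lambda: True),
    ("migration and rollback rehearsed", lambda: False),
    ("security scan clean", lambda: True),
]

results = run_checklist(checks)
failed = [result.name for result in results if not result.passed]

if ready_to_deploy(results):
    print("All checks passed. Ready to deploy.")
else:
    print("Blocked by:", ", ".join(failed))
```

Running all checks instead of stopping at the first failure gives the deployer one complete list of what to fix.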

Implementing Progressive Rollout Strategies

Progressive rollouts let you test in production without risking your entire user base on a single deploy.

Canary deployments route a small percentage of traffic to the new version while most users stay on the stable release. You monitor error rates, performance metrics, and user behavior. If problems appear, you roll back before most users notice. If metrics look good, you gradually increase the percentage until everyone's on the new version.
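As a rough sketch of the mechanics, the example below assigns users to the canary with a stable hash and decides whether to widen or roll back based on error rates. The function names, the rollout steps, and the one-percentage-point regression threshold are assumptions chosen for illustration. Real setups usually do this in a load balancer or service mesh and watch more metrics than error rate alone.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user to a stable bucket from 0 to 99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def route(user_id: str, canary_percent: int) -> str:
    # The same user always lands in the same bucket, so their experience is consistent.
    return "canary" if bucket(user_id) < canary_percent else "stable"


def next_canary_percent(current: int,
                        canary_error_rate: float,
                        stable_error_rate: float,
                        max_regression: float = 0.01,
                        steps: tuple = (1, 5, 25, 50, 100)) -> int:
    """Return the next traffic percentage, or 0 to signal a rollback."""
    if canary_error_rate > stable_error_rate + max_regression:
        return 0
    for step in steps:
        if step > current:
            return step
    return 100


assignments = [route(f"user-{i}", canary_percent=5) for i in range(10_000)]
canary_share = assignments.count("canary") / len(assignments)
print(f"Share of users on canary: {canary_share:.1%}")

print(next_canary_percent(5, canary_error_rate=0.002, stable_error_rate=0.002))  # 25
print(next_canary_percent(5, canary_error_rate=0.050, stable_error_rate=0.002))  # 0
```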

Blue-green deployments maintain two identical production environments. You deploy changes to the inactive environment, run final validation, then switch traffic over. If something breaks, you switch back instantly.
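The switch itself can be modeled in a few lines. The `BlueGreenRouter` class below is a hypothetical stand-in for whatever actually directs traffic (a load balancer, DNS record, or router config), and the `health_check` callback is assumed to wrap your final validation. It deploys to the idle environment, moves traffic only if validation passes, and rolls back by pointing traffic at the previous environment.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class BlueGreenRouter:
    versions: dict[str, str]  # environment name -> deployed version
    live: str = "blue"

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    def deploy(self, version: str, health_check: Callable[[str], bool]) -> bool:
        """Deploy to the idle environment and switch traffic only if it validates."""
        target = self.idle
        self.versions[target] = version
        if not health_check(target):
            return False  # traffic never moved, so users are unaffected
        self.live = target
        return True

    def rollback(self) -> None:
        # The previous version is still running on the other environment.
        self.live = self.idle


router = BlueGreenRouter(versions={"blue": "v1", "green": "v1"})

switched = router.deploy("v2", health_check=lambda env: True)
print(switched, router.live, router.versions[router.live])  # True green v2

router.rollback()
print(router.live, router.versions[router.live])  # blue v1
```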

Feature flags decouple deployment from release. You ship code to production but keep it hidden behind a flag. Turn it on for internal users first, then beta customers, then everyone. You're validating with real data and real usage patterns while controlling exposure.
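Here is a minimal sketch of that staged exposure. The `Stage` enum, the `User` fields, and the `is_enabled` function are assumptions for illustration and do not reflect any specific flag service's API. The flag moves through internal, beta, and everyone, and each stage includes the audiences before it.

```python
from dataclasses import dataclass
from enum import IntEnum


class Stage(IntEnum):
    OFF = 0
    INTERNAL = 1
    BETA = 2
    EVERYONE = 3


@dataclass(frozen=True)
class User:
    id: str
    is_internal: bool = False
    is_beta: bool = False


def is_enabled(stage: Stage, user: User) -> bool:
    """Decide whether a user sees the flagged feature at the current stage."""
    if stage == Stage.OFF:
        return False
    if stage == Stage.EVERYONE:
        return True
    if user.is_internal:
        return True  # internal users see it at both INTERNAL and BETA
    return stage == Stage.BETA and user.is_beta


employee = User("u1", is_internal=True)
beta_customer = User("u2", is_beta=True)
customer = User("u3")

for stage in Stage:
    visible_to = [u.id for u in (employee, beta_customer, customer) if is_enabled(stage, u)]
    print(stage.name, visible_to)
```

Because the code is already deployed at every stage, turning the feature off again is a flag change, not a redeploy.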

These strategies work because they validate under actual production conditions. Staging environments often struggle to fully replicate real user behavior, traffic patterns, and production data quirks.

Testing Product Changes in Browser-Based Cloud Environments

Browser-based cloud environments remove the setup friction that slows down pre-deployment testing. Instead of configuring local environments, teams can spin up isolated sandboxes connected to real codebases in seconds.

Alloy creates shareable cloud sandboxes that let you modify your actual product interface using natural language. Describe the change you want to test, and the environment updates the real UI inside an isolated instance. You're working with your actual software in a safe, contained space.

This removes the bottleneck where product managers and designers need engineering help to validate every idea. Non-technical team members can test changes themselves, share links with stakeholders, and gather feedback before any code reaches production. Each sandbox runs independently, so multiple experiments can happen in parallel without conflicts.

Every sandbox is shareable via link. Stakeholders interact with working product changes, experience new flows, and provide feedback without installing anything. You're validating with behavioral realism that static designs can't provide, catching usability issues and edge cases before committing engineering resources to merge.

FAQs

What's the difference between staging environments and cloud sandboxes?

Staging environments replicate your full production setup for final validation before release, while cloud sandboxes are isolated, instantly shareable instances that let multiple teams test different changes in parallel. Sandboxes work best for early experimentation and stakeholder feedback, while staging handles your final pre-deployment checks.

Can non-technical team members validate product changes before deployment?

Yes. Browser-based cloud environments let product managers and designers test changes directly without engineering setup. Tools like Alloy allow you to modify real product interfaces using plain descriptions, share working versions via link, and gather feedback before any code reaches production.

Why do integration tests miss problems that only appear in production?

Integration tests often use mocked dependencies, test data, and simplified configurations that don't match real-world conditions. Production has actual traffic patterns, full-scale data volumes, real third-party service behaviors, and edge cases that testing environments can't fully replicate. This is why progressive rollouts catch issues staging misses.

Final Thoughts on Pre-Deployment Validation

Teams that consistently ship stable releases make a habit of testing product changes before deployment, in environments that reflect how the product actually behaves. Catching usability problems, broken flows, and unexpected edge cases early prevents the late-night rollbacks that drain engineering time and user trust. The most reliable process pairs automated tests with hands-on validation in realistic sandboxes where teams can interact with working product changes before code reaches production. Tools like Alloy support this approach by giving teams a way to experiment with real interfaces, validate ideas, and gather feedback in isolated environments before a release goes live.